Max Chantha | November 7, 2015

WASHINGTON – While an automated policing system that predicts where and when crime will occur sounds like an Orwellian fantasy, it may be closer to reality than we think.

Seattle’s fledgling Real Time Crime Center (RTCC) is an automated system that aims to predict crimes by merging historical metadata on crimes, 911 calls, and geography, but critics believe that it has the potential to sink America into a technology-fueled police state.

The Seattle Police Department is experimenting with the new system, and although it is not fully operational, the workings of its predictive software have been unveiled to the media.

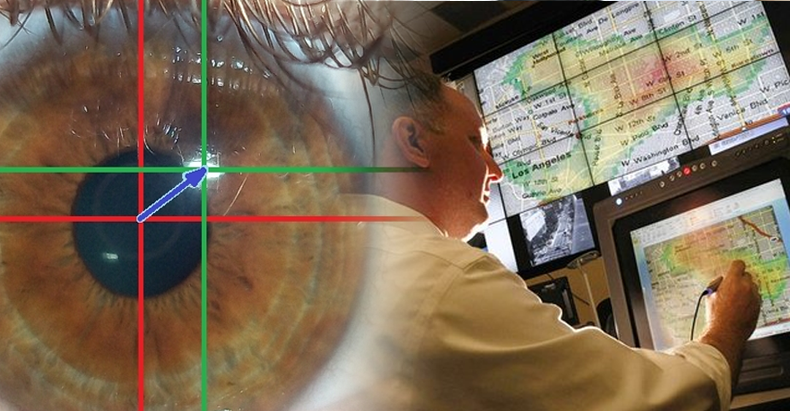

The system displays, on large screens, where patrol cars are in real time, along with aggregated crime data for certain areas.

It calculates this based on 911 calls from the area, the types of crime reported, whether those crimes have increased or decreased in the area, and other relevant factors.

The system has been described, by the department’s own COO Mike Wagers, as an attempt to ‘forecast’ crime.

While the logic behind the system may seem sound – using past data to predict where crime has occurred and will likely continue to occur – several critics, including the ACLU, have pointed out its capacity for inaccuracy and embellishment.

The data used by RTCC may not be comprehensive enough to accurately predict where crime will occur.

Residents of neighborhoods of color are far less likely to call the police when crime occurs due to mistrust of the police – thus such an area may be heavily crime-ridden, but will be underrepresented in the RTCC system.

It is also conceivable that a large number of arrests are unwarranted or racially motivated, meaning that areas where people are often harassed by the police will only receive further scrutiny, when they may in fact be largely free of dangerous crime.

Perhaps the most concerning issue with the RTCC is the precedent it sets.

The neighborhoods we live in may be policed – and the police work executed – according to the informational output of a machine.

While the police are human and therefore fallible, they are capable of empathy, an understanding of nuance, and other inherently human abilities.

These humanizing cognitive skills do not exist in computers.

It is a slippery slope from systems like the RTCC to drones and mass surveillance systems – both strategies that Seattle PD has tried to execute in the past.

In a sense, the reins of policing strategy and implementation will be placed in the hands of logical but unthinking machines that do not feel empathy and rely on data entered by fallible humans.

The possibility exists that the system will become a robotic extension of the flawed and controlling policing structure created by authoritarian humans, and that, instead of removing human error from the equation, it will solidify that error's role in policing.

Max Chantha is a writer and investigative journalist interested in covering incidents of government injustice, at home and abroad. He is a current university student studying Global Studies and Professional Writing. Check out Max Chantha: An Independent Blog for more of his work.